
Neuro-Energetics: Capillary-Astrocyte-Neuron Model

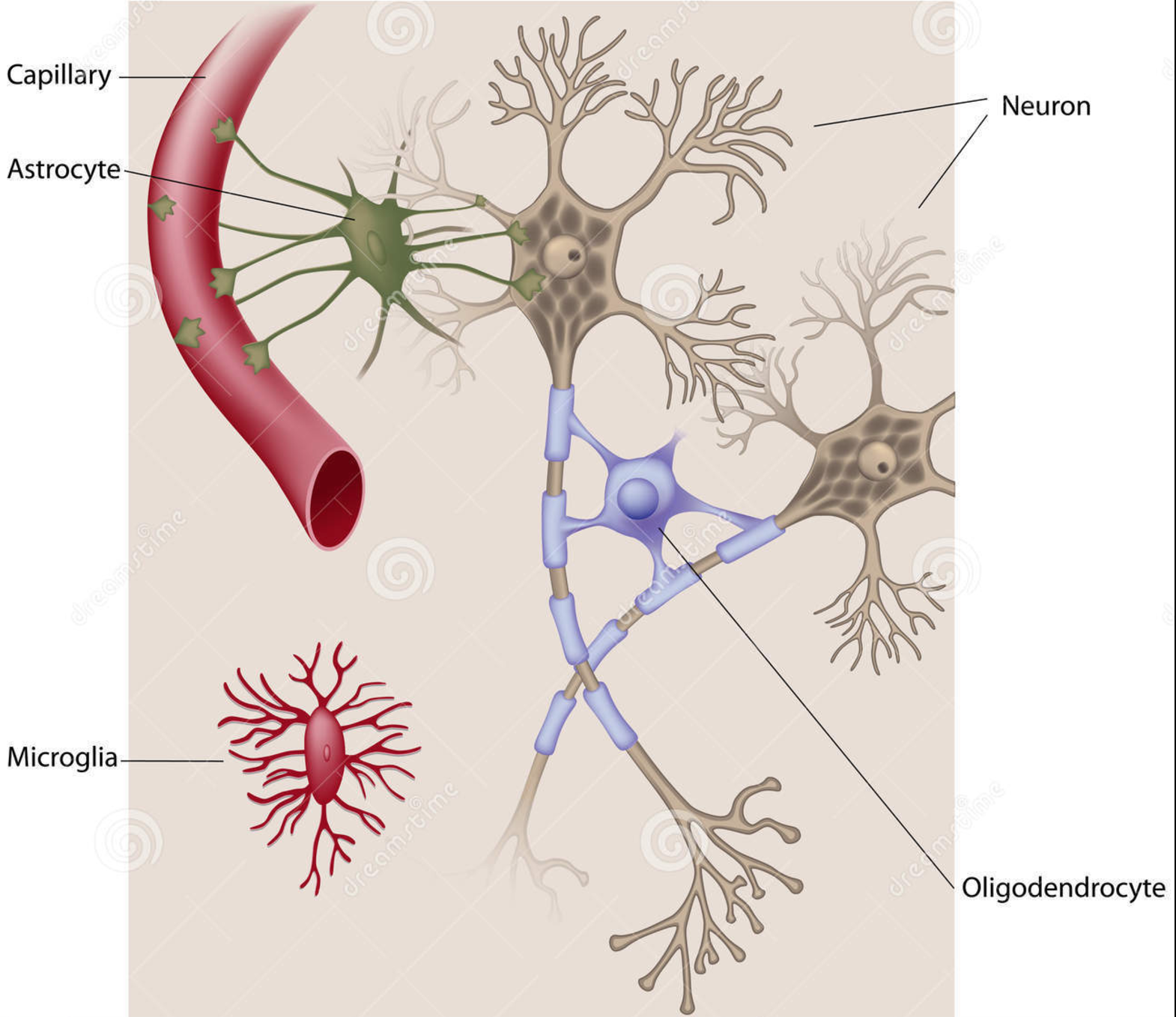

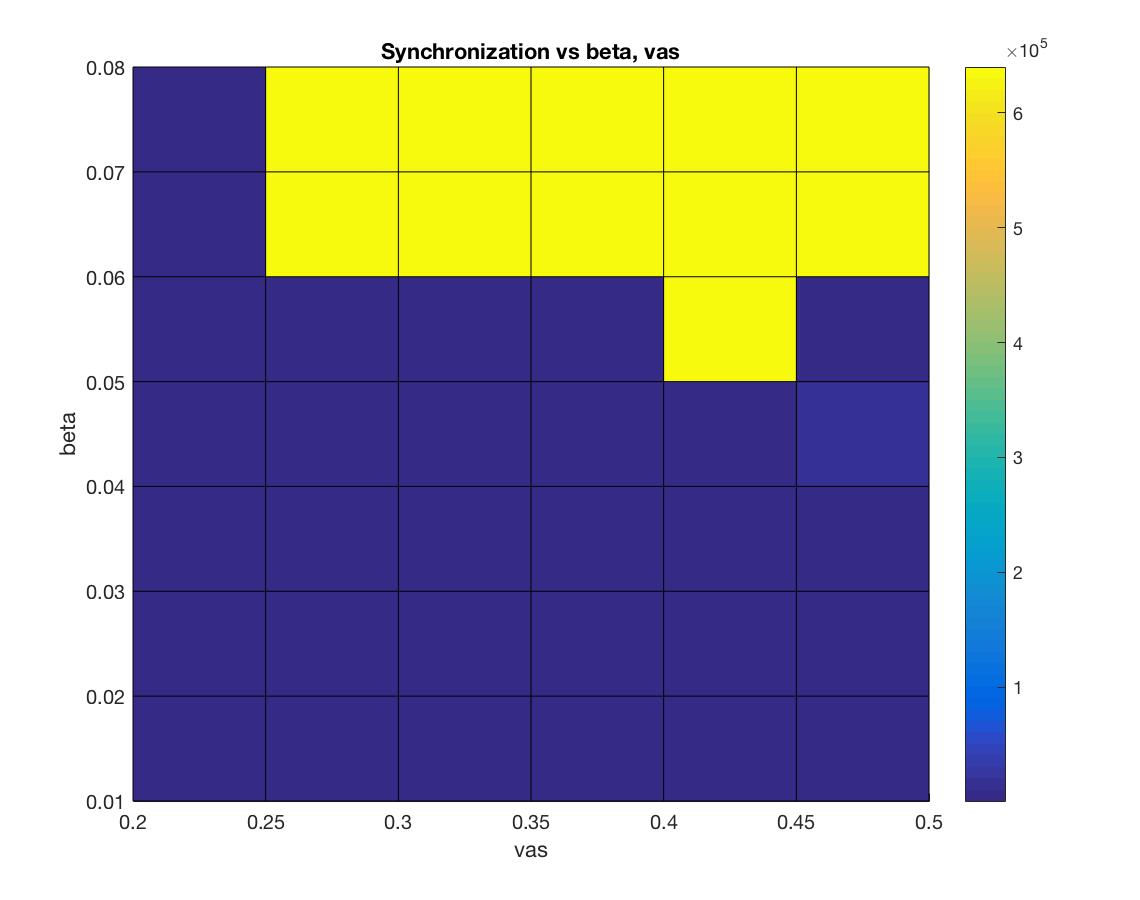

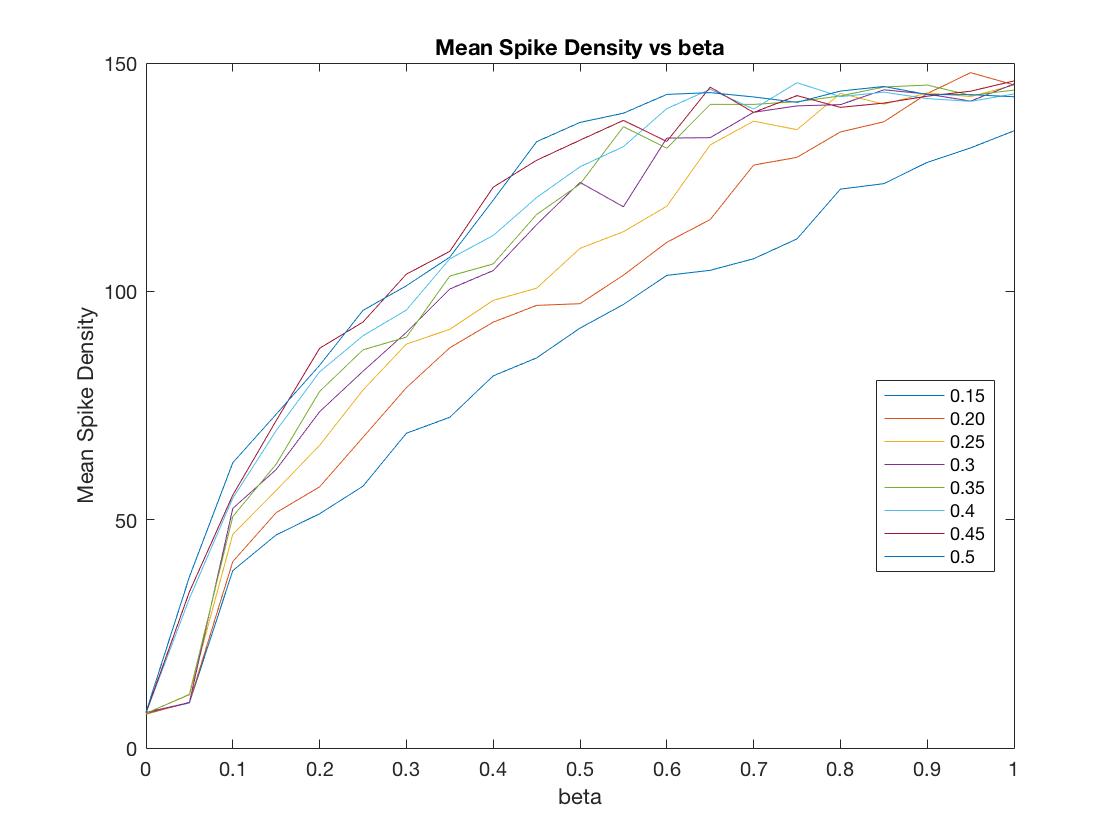

For this undertaking, we are focused on studying, modeling, and simulating the ways in which the human brain manages its energy supply. As the computational power and connectedness of our digital society continue to grow, so does the need to curtail the energy consumption of its devices and networks. Engineers are now looking to the highly energy-efficient human brain for inspiration in guiding the next generation of computing architectures. As the world becomes ever more “plugged in” and dependent on the worldwide physical network that serves up social media, email and, more generally, the web-based culture we interface with every day, attention has turned to mitigating the massive energy cost that such an enormous computational infrastructure imposes on the planet. Given the small-world and scale-free similarities between the architectures of massively parallel, interconnected systems such as the internet and the human brain, researchers are now looking to the biological wisdom of the brain for insight into how to design massively parallel network architectures that are energy efficient. Case in point: the June 2017 issue of the popular trade periodical IEEE Spectrum is a special issue on recent progress in modeling the brain in physical hardware. One of the primary themes that cuts across several articles in this issue is the importance, even necessity, of building into these new machines the mechanisms through which the biological brain manages its energy stores. Understanding how the brain does so is essential if we are to reproduce its functional properties in an energy-efficient manner.
So important is understanding and exploiting the mechanisms the brain uses to maximize energy efficiency that several of the contributing authors deem it essential to moving artificial intelligence (AI), and even computing in general, forward. For example, in the article entitled “The dawn of the real thinking machine,” Fred Rothganger states, “…with an average basal metabolism of 100 watts, each of us is equipped with the biological equivalent of a 20 watt supercomputer. Even today’s most powerful computers, running at 20 million watts, can’t come close to matching the brain.” Karlheinz Meier, in his article “The brain as computer,” discusses a simulation of a small region of the brain on the K supercomputer in Japan, stating, “Simulating 1.73 billion neurons consumed 10 billion times as much energy as an equivalent size portion of the brain.” He goes on to say, “No matter how much energy a conventional computer uses, it will struggle with feats the brain finds easy….If we could create machines with the computational capacity and energy efficiency of the brain, it would be a game changer.” Finally, Jennifer Hasler, in her article “A road map for the artificial brain,” states, “…the energy efficiency of digital computing is fast approaching its limits. Our data centers and supercomputers already draw megawatts—some two percent of the electricity consumed in the United States goes to data centers alone. The human brain, by contrast, runs quite well on about 20 watts…If we want to keep improving computing, we will need our computers to become more like our brains.” Yet despite these calls, and similar ones from across the AI landscape, for a clearer understanding of how the human brain manages its energy resources to produce intelligent thought and behavior at minimal cost, such research efforts are conspicuously absent from the cognitive neuroscience and AI literature.
In fact, there are currently essentially no comprehensive research efforts that attempt to model how metabolic nutrient exchange in the brain relates to its various hierarchical activity states. These activity states are: 1) microscopic single-neuron spiking behavior, 2) mesoscopic local-field-potential population dynamics, and 3) more global, macroscopic intra-hemispheric and cross-hemispheric cortical interactions that identify “resting-state networks” (RSNs) in the human brain. In answering this call for a more comprehensive analysis of how the human brain manages its energy resources to produce intelligent thought and behavior, we at the Biologically Inspired Neural and Dynamical Systems (BINDS) Laboratory at the University of Massachusetts Amherst have developed a working mathematical model and computer simulation of how the brain accomplishes this task. This model, which we call the “Capillary-Astrocyte-Neuron” (CAN) model, simulates the metabolic fluxes in cortical neuropil related to: glucose and oxygen delivery through cerebral blood flow (CBF); the removal of heat and carbon dioxide; the production and maintenance of glycogen stores in supporting astrocytes; the delivery of energy-precursor molecules from the astrocyte glycogen stores to the neuron; the neuron’s use of this energy to produce ATP; and, finally, the manner in which the neuron uses this available energy to carry out its integrate-and-fire duties in the brain.
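The chain of fluxes listed above can be made concrete with a deliberately small sketch. This is not the CAN model, whose equations are not given here; it is a hypothetical one-neuron toy that assumes first-order transfer between compartments, lumps glucose and oxygen into a single nutrient supply scaled by `vas`, omits heat and carbon dioxide removal, and assumes that the depth of the post-spike membrane reset is limited by the ATP available. Every parameter name and value (`k_store`, `k_release`, `atp_per_spike`, and so on) is an illustrative assumption.

```python
from dataclasses import dataclass


@dataclass
class CANParams:
    """Hypothetical parameters for a one-neuron toy of the CAN chain (not the BINDS values)."""
    vas: float = 1.0            # vascular (CBF) input scaling nutrient delivery
    beta: float = 0.5           # astrocyte-to-neuron coupling factor
    k_store: float = 0.2        # delivered nutrient converted to astrocyte glycogen per step
    k_release: float = 0.1      # fraction of glycogen mobilized as energy precursor per step
    k_atp: float = 1.0          # ATP produced per unit of precursor reaching the neuron
    atp_basal: float = 0.05     # housekeeping ATP use per step
    atp_per_spike: float = 0.5  # ATP needed for a full membrane reset after a spike
    tau_m: float = 10.0         # membrane time constant, in steps
    v_th: float = 1.0           # firing threshold (a full reset returns to 0)
    drive: float = 0.15         # constant input current per step


def simulate(p: CANParams, steps: int = 1000, dt: float = 1.0):
    """Euler-integrate capillary -> astrocyte -> neuron; return spike times and final pools."""
    glycogen, atp, v = 1.0, 1.0, 0.0
    spikes = []
    for t in range(steps):
        # Capillary -> astrocyte: supply scales with vas; glycogen is drawn down at k_release.
        glycogen += dt * (p.k_store * p.vas - p.k_release * glycogen)
        # Astrocyte -> neuron: only the fraction beta of the mobilized precursor reaches the neuron.
        flux = p.beta * p.k_release * glycogen
        # Neuron: ATP made from precursor, minus basal consumption (never below zero).
        atp = max(atp + dt * (p.k_atp * flux - p.atp_basal), 0.0)
        # Leaky integrate-and-fire membrane.
        v += dt * (-v / p.tau_m + p.drive)
        if v >= p.v_th:
            spikes.append(t)
            # Assumption: reset depth is limited by available ATP, so an ATP-starved
            # neuron is only partially repolarized and fires again sooner.
            cost = min(atp, p.atp_per_spike)
            atp -= cost
            v = p.v_th * (1.0 - cost / p.atp_per_spike)
    return spikes, atp, glycogen


if __name__ == "__main__":
    for vas in (1.0, 0.0):
        spikes, atp, glycogen = simulate(CANParams(vas=vas))
        print(f"vas={vas}: {len(spikes)} spikes, final ATP={atp:.2f}, glycogen={glycogen:.2f}")
```

With the defaults, the vascular supply keeps ATP high enough for full resets and the neuron fires regularly; with `vas=0.0` the ATP pool runs dry, resets become incomplete, and the toy neuron fires far more often. Only that qualitative sensitivity of firing to ATP is meant to carry over; the numbers have no physiological meaning.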
Through intensive analysis of these simulation results, combined with an exhaustive review of the literature on neuronal cellular metabolism and spiking behavior, we have come to the tentative conclusion that the human brain manages its energy resources by supplying cooperating regions of the cerebral cortex with a cooperatively cycled availability of nutrients (e.g., glucose and oxygen). This shared supply helps the neurons populating those regions restore their resting membrane potentials at similar rates. Such cooperativity matters because information is transferred about the brain in the form of spatially amplitude-modulated (AM) patterns defined by highly phase-synchronized, narrow-band oscillations. To form such highly coordinated activity within and between coupled cortical regions, the energy (ATP) concentration must be roughly similar across the neurons of those regions, because the firing-rate patterns of these neurons are sensitive to how robustly their membrane potentials are reset, which in turn depends on their ATP concentrations. The CAN model couples a metabolic system to a network of neurons. The metabolic system has a vascular input, 'vas', and its output to the neuronal system is governed by a coupling factor, 'beta'. Varying 'beta' and 'vas' affects the synchronization index, i.e., the extent to which the neurons' firings are synchronized. An experiment was carried out to assess this effect; its results are shown in Figure 1.
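The section does not say how the synchronization index is computed, so any reproduction has to choose a definition. A minimal sketch, assuming that a variance-ratio synchrony measure (often attributed to Golomb and Rinzel) is an acceptable stand-in, which may well differ from the index behind Figure 1: χ² is the temporal variance of the population-averaged membrane potential divided by the mean temporal variance of the individual potentials. It approaches 1 when all neurons move together and falls toward 1/√N for N independent neurons. In an experiment like the one above it would be evaluated on the network's traces once per ('beta', 'vas') setting; the function name `synchrony_index` is hypothetical.

```python
import math
from statistics import mean, pvariance


def synchrony_index(traces):
    """Variance-ratio synchrony measure chi for a set of membrane-potential traces.

    traces[i][t] is the potential of neuron i at time step t (all traces equal length).
    chi**2 = Var_t(population-mean potential) / mean_i Var_t(potential of neuron i).
    """
    n_steps = len(traces[0])
    # Population-averaged potential at each time step.
    population = [mean(trace[t] for trace in traces) for t in range(n_steps)]
    # Average of the individual temporal variances.
    individual = mean(pvariance(trace) for trace in traces)
    if individual == 0:
        return 0.0  # no fluctuations at all: synchrony is undefined, report 0
    return math.sqrt(pvariance(population) / individual)


if __name__ == "__main__":
    n, steps = 8, 200
    locked = [[math.sin(2 * math.pi * t / 50) for t in range(steps)] for _ in range(n)]
    spread = [[math.sin(2 * math.pi * t / 50 + 2 * math.pi * i / n) for t in range(steps)]
              for i in range(n)]
    print("phase-locked:", synchrony_index(locked))
    print("evenly spread phases:", synchrony_index(spread))
```

Identical traces give χ = 1, while evenly spread phases cancel in the population average and give χ close to 0. An amplitude-based index, as the name "amplitude-synchronization index" suggests, could be substituted without changing the surrounding sweep logic.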
A second experiment assessed the impact of 'beta' and 'vas' on spike density, the average number of neurons that fire at each time step; its results are shown in Figure 2. We have also developed a graphical user interface that accepts values for the model parameters and runs the simulation. The simulation displays the overall spiking behavior of the entire network and the spiking activity of a single neuron, along with the amplitude-synchronization index and the spike density. Going forward, the neuroenergetics division of the BINDS lab will continue to refine the CAN model, with the ultimate aim of providing a reliable “bottom-up” microscopic complement to the widely used macroscopic “top-down” fMRI technique, which studies cortical cognition in humans by analyzing slow-wave fluctuations in CBF patterns across the cerebral cortex and the whole brain. Through these efforts, we hope that our model will lay the groundwork for designing future neural network and artificial intelligence hardware architectures that exploit the energy-efficient mechanisms found in the biological human brain.
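Spike density as defined above is the total spike count in a raster divided by the number of time steps. The sketch below computes it and sweeps a ('beta', 'vas') grid, which is the shape of the experiment behind Figure 2. The population it sweeps is a hypothetical stand-in for the CAN network, not a reproduction of it: uncoupled leaky integrate-and-fire neurons whose ATP pools refill at a rate proportional to beta × vas, with the same ATP-limited reset assumption as before. The constants and the names `toy_population` and `sweep` are illustrative assumptions, and the printed densities say nothing about the actual Figure 2 results.

```python
import random

# Hypothetical toy constants (assumptions, not CAN model values).
SUPPLY_GAIN = 0.1    # ATP delivered per step per unit of beta * vas
ATP_BASAL = 0.02     # housekeeping ATP use per step
ATP_PER_SPIKE = 0.5  # ATP needed for a full reset after a spike
ATP_MAX = 2.0        # ceiling on each neuron's ATP pool
LEAK = 0.9           # membrane decay per step (tau = 10 steps)
V_TH = 1.0           # firing threshold; a full reset returns to 0


def spike_density(raster):
    """Average number of neurons that fire per time step.

    raster[t][i] is 1 if neuron i spiked at step t, else 0.
    """
    if not raster:
        return 0.0
    return sum(sum(row) for row in raster) / len(raster)


def toy_population(beta, vas, n=20, steps=500, seed=0):
    """Uncoupled leaky integrate-and-fire neurons with ATP-limited resets.

    Each neuron's ATP pool is refilled at a rate proportional to beta * vas.
    Returns a spike raster with `steps` rows and `n` columns.
    """
    rng = random.Random(seed)
    drive = [0.12 + 0.06 * rng.random() for _ in range(n)]  # heterogeneous input
    v = [0.0] * n
    atp = [1.0] * n
    raster = []
    for _ in range(steps):
        row = [0] * n
        for i in range(n):
            atp[i] = min(max(atp[i] + SUPPLY_GAIN * beta * vas - ATP_BASAL, 0.0), ATP_MAX)
            v[i] = LEAK * v[i] + drive[i]
            if v[i] >= V_TH:
                row[i] = 1
                cost = min(atp[i], ATP_PER_SPIKE)
                atp[i] -= cost
                # Partial reset when ATP is short (an assumption of this sketch).
                v[i] = V_TH * (1.0 - cost / ATP_PER_SPIKE)
        raster.append(row)
    return raster


def sweep(betas, vases, **kwargs):
    """Spike density for every (beta, vas) pair on the grid."""
    return {
        (beta, vas): spike_density(toy_population(beta, vas, **kwargs))
        for beta in betas
        for vas in vases
    }


if __name__ == "__main__":
    for (beta, vas), density in sorted(sweep([0.25, 0.5, 1.0], [0.25, 0.5, 1.0]).items()):
        print(f"beta={beta:.2f} vas={vas:.2f} spike density={density:.2f}")
```

Keeping `spike_density` separate from the simulator means the same grid harness can wrap a real network simulation, or a synchrony metric instead of density, without modification.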